Web access from mobile devices is increasing, and because the display area and capabilities of mobile devices differ from those of desktop machines, web pages have to be rendered efficiently for them. This means web pages need to be re-authored for mobile devices. Re-authoring can be done manually, but so many web pages are highly dynamic that an automated approach is needed.

There are two sides to web page access on mobile devices. First, pages can be made mobile friendly on the server side. Second, the page can be adapted by the web browser on the mobile device itself. The technologies on both sides change frequently and keep getting more complex, which raises the need for standards. For this reason the W3C has come up with a scheme called mobileOK, which it is still working on. In addition, the top-level domain .mobi has been launched for web sites that cater to mobile devices.

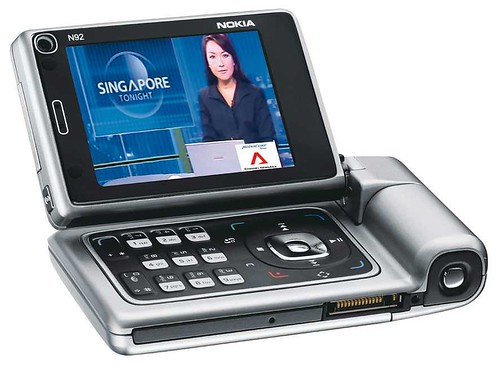

Before getting into the re-authoring technologies, it is necessary to know about mobile web browsers, mobile devices and web page rendering technologies. The web browser on a handheld device knows the capabilities of that device, so browsers play a key role on the mobile web. Some of these browsers are Minimo, Opera Mini, Safari, Pocket Internet Explorer, Access Netfront and Bitstream ThunderHawk, and each has different capabilities from the others. It is also necessary to know the capabilities of the different handheld devices. There are many devices, and some of the categories with web access are PDAs, smart phones and mobile phones. The other important topic is the transmission and rendering technologies for mobile devices, where efficiency and user friendliness matter most. These include presentation optimization, semantic conversion and format conversion.

The next step is to identify the challenges in developing an automated tool for rendering web pages on handheld devices. The underlying idea is that it is easier to adapt pages to a particular device on the device itself than on the server, because handheld devices are getting more and more powerful and the device knows its own capabilities better than the server does. The challenges are presented in the research paper “Detecting Web Page Structure for Adaptive Viewing on Small Form Factor Devices”, which is essentially about detecting the elements of a web page. The idea is to analyze the web page and render it according to the display area of the device.

The analysis starts from the DOM tree of the web page and then detects the page structure in more detail. The first stage is High-Level Block Detection, which gets a basic idea of the structure of the page by identifying the header, the footer and the left and right side bars. The page is then analyzed for its elements, which is done by Explicit Separator Detection. Finally, Implicit Separator Detection identifies the blank areas on the page.

The main goal is then to generate the page for the device, which requires creating sub pages. The final result is an index page plus sub pages for the detected elements. The user sees the whole page at a small size and can open any part of it simply by clicking on that element. As a further development, the element could be viewed on the same page instead of on a generated sub page. For example, when the user clicks on an element, it could be zoomed in place while all the other parts of the page remain visible.
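To make the High-Level Block Detection stage more concrete, here is a minimal sketch of how blocks could be labelled by their position on the page. It is not the algorithm from the paper: the `Block` type, the `classify` function and all of the thresholds are assumptions made for illustration, and the block geometry is assumed to come from some layout step that is not shown.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """Hypothetical rectangle for one DOM block, in page pixels."""
    name: str
    x: int
    y: int
    width: int
    height: int


def classify(block, page_width, page_height, edge=0.2):
    """Label a block as header, footer, side bar or body by its position.

    All thresholds are illustrative assumptions, not values from the paper.
    """
    if block.width >= 0.8 * page_width:
        # A wide block that ends near the top is treated as the header.
        if block.y + block.height <= edge * page_height:
            return "header"
        # A wide block that starts near the bottom is treated as the footer.
        if block.y >= (1 - edge) * page_height:
            return "footer"
    else:
        # A narrow block hugging the left or right edge is a side bar.
        if block.x + block.width <= 0.3 * page_width:
            return "left sidebar"
        if block.x >= 0.7 * page_width:
            return "right sidebar"
    return "body"


blocks = [
    Block("banner", 0, 0, 1000, 150),
    Block("nav", 0, 150, 200, 1600),
    Block("article", 200, 150, 600, 1600),
    Block("ads", 800, 150, 200, 1600),
    Block("copyright", 0, 1800, 1000, 200),
]
labels = {b.name: classify(b, 1000, 2000) for b in blocks}
```

Each labelled block could then become one entry on the index page and one sub page, which is the output the paragraph above describes.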

The next question is how to deploy this new way of page rendering. It can be done on the client side or on the server side (proxy). On the client side it can be deployed as a browser add-on, so that all the functions run on the handheld device. If that is not possible because the client has limited resources, it can be deployed on the server side instead. In that case the server generates the thumbnail of the web page, sends it to the requesting device and caches the generated sub pages, which are then sent on request. For complex and high-demand sites this can also be done on a separate server. The web site can then be maintained independently of the device, and the administrators do not have to worry about the page layout and the content. Further research is needed on how to move smoothly to this new way of page rendering.
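The server-side (proxy) deployment described above can be sketched as a small cache in front of a page splitter. This is only an illustration under stated assumptions: `SubpageProxy` and its methods are hypothetical names, and `split_page` stands in for whatever analysis step produces the index page and the sub pages.

```python
class SubpageProxy:
    """Hypothetical proxy that serves an index page and caches sub pages."""

    def __init__(self, split_page):
        # split_page is an assumed callable: url -> (index_page, {sub_id: sub_page})
        self.split_page = split_page
        self.cache = {}

    def get_index(self, url):
        """Analyze the page once, cache its sub pages, return the index page."""
        index_page, sub_pages = self.split_page(url)
        self.cache[url] = sub_pages
        return index_page

    def get_subpage(self, url, sub_id):
        """Serve a sub page from the cache, analyzing the page first if needed."""
        if url not in self.cache:
            self.get_index(url)
        return self.cache[url][sub_id]
```

With this shape the expensive analysis runs once per page, and later requests for sub pages are answered from the cache. A real proxy would also need cache expiry for dynamic pages, which is left out here.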

There are three different ways of re-authoring web pages for different devices: by hand, transcoding and automated re-authoring. Originally, separate sites were maintained by hand for different devices, which has not proved a good long-term solution. Transcoding generates the whole page for the requesting device and needs a high-performance proxy. I think the structure-detection approach described earlier can also be used as a transcoding technique. However, since mobile networks have low bandwidth and transcoding tries to push all of the web page content to the device, it is hard to depend on transcoding alone. So a good automated re-authoring technology is needed for web pages that have to be rendered on mobile devices.

As an example of an automated re-authoring tool I have used the research paper “Web Page Filtering and Re-Authoring for Mobile Users”, whose solution is named “The Digestor system”. There are five general approaches to displaying web pages on handheld devices: device-specific authoring, multiple-device authoring, client-side navigation, automatic re-authoring and web page filtering. The Digestor system uses automatic re-authoring and web page filtering to render any web page on an arbitrary handheld device. Automated re-authoring techniques can be classified as syntactic or semantic, and as transformation or elision. The Digestor system uses syntactic transformations. When a mobile device requests a page, the Digestor system analyzes the page, transforms it into HDML, which is supported by most mobile devices, and passes it to the requesting device. During the analysis stage it ranks the sub pages, and if the number of sub pages in HDML is so high that they are difficult to browse, some pages are filtered out according to their rank. The result shows a table of contents first, with hyperlinks to the content, and natural language technologies are also used for summarization.
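The rank-based filtering and the table of contents can be illustrated with a short sketch. It does not reproduce Digestor's actual ranking or its HDML output: the `Subpage` type, the `rank` scores, the `max_pages` limit and the `#pageN` link format are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Subpage:
    title: str
    rank: float  # hypothetical score; higher means more important
    body: str


def filter_by_rank(subpages, max_pages):
    """Keep the max_pages highest-ranked sub pages, in document order."""
    if len(subpages) <= max_pages:
        return list(subpages)
    by_rank = sorted(range(len(subpages)),
                     key=lambda i: subpages[i].rank, reverse=True)
    keep = sorted(by_rank[:max_pages])  # restore the original order
    return [subpages[i] for i in keep]


def table_of_contents(subpages):
    """Return one (link target, title) pair per sub page."""
    return [(f"#page{i}", page.title)
            for i, page in enumerate(subpages, start=1)]


pages = [
    Subpage("News", 0.9, "..."),
    Subpage("Ads", 0.1, "..."),
    Subpage("Sports", 0.5, "..."),
    Subpage("Legal", 0.2, "..."),
]
kept = filter_by_rank(pages, max_pages=2)
toc = table_of_contents(kept)
```

Here the two lowest-ranked sub pages are dropped and the remaining ones keep their original order, so the table of contents still follows the flow of the source page.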

I present a system like Digestor because it represents a typical automated re-authoring tool, deployed as a proxy in front of the mobile device. I think it is better to study systems like this before developing a new automated re-authoring tool, and it is also necessary to know the practical issues such systems face. Issues were raised concerning debugging, optimization and web content delivery, so these were studied in detail as well.

The next important topic is the future work on such systems, because accessing web pages efficiently and in a user-friendly manner on mobile devices is still a problem. Future automated re-authoring tools need the following: more user control, improved measures, more transformation techniques, better filter scripts and a better proxy infrastructure.

Research in this field is at an early stage, and there is a strong need for a better tool to render web pages on mobile devices. It is worth learning about the research already done in this field, otherwise we will have to reinvent the wheel. Since web servers and web clients (mobile devices) are getting more and more complex, there is a need for structured standards. And when we research new tools, it is important to find a smooth way to move to the new technology rather than building tools that demand an all-or-nothing switch.
